Latest Sessions


S15 | Planner Aware Path Learning in Diffusion Language Models Training

Fred Zhangzhi Peng presents Planner Aware Path Learning (PAPL), a simple modification to the masked diffusion loss that aligns training with planner-based inference, yielding large gains on protein modeling, text generation, and code.

In today's session, Fred Zhangzhi Peng examines a fundamental mismatch between training and planner-based inference in diffusion language models. While planners enable faster, more flexible generation by selecting non-uniform denoising paths, standard training assumes uniformly random trajectories, which leads to a misaligned objective. The talk presents a theoretical analysis showing that the standard ELBO fails under planning, and proposes a new Planned ELBO (P-ELBO) along with Planner Aware Path Learning (PAPL), a simple modification to the masked diffusion loss that aligns training with inference. Empirically, PAPL yields strong gains across domains, including a ~40% improvement on protein modeling, up to 4x MAUVE gains in text generation, and +23% on HumanEval pass@10 for code generation.
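For context, the sketch below shows the *standard* masked diffusion objective that PAPL modifies, i.e. the uniformly-random-path version, not PAPL or P-ELBO themselves. It is a minimal NumPy sketch assuming a linear schedule (each token is masked independently with probability `t`), under which the continuous-time ELBO weights the masked-token cross-entropy by `1/t`. `MASK_ID` and the function names are hypothetical.

```python
import numpy as np

MASK_ID = 0  # hypothetical mask-token id for this toy example

def mask_tokens(x0, t, rng):
    """Forward process: replace each token with MASK_ID independently with
    probability t (assumed linear schedule, alpha_t = 1 - t)."""
    masked = rng.random(x0.shape) < t
    xt = np.where(masked, MASK_ID, x0)
    return xt, masked

def masked_diffusion_loss(log_probs, x0, masked, t):
    """Cross-entropy on masked positions only, weighted by 1/t.

    log_probs: (L, V) model log-probabilities of the clean token at each position.
    Unmasked positions contribute nothing: their clean token is already visible.
    """
    token_nll = -log_probs[np.arange(len(x0)), x0]
    return (token_nll * masked).sum() / t

# Usage: draw t ~ U(0, 1), corrupt x0, run a model on xt, then score it.
rng = np.random.default_rng(0)
x0 = np.array([5, 2, 7, 1])
t = rng.uniform(1e-3, 1.0)  # avoid t = 0, where the 1/t weight blows up
xt, masked = mask_tokens(x0, t, rng)
```

Because every position is equally likely to be masked at every `t`, this loss implicitly trains for uniformly random unmasking orders, which is the assumption the talk argues breaks down once a planner chooses the order at inference time.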

S14 | One-step Language Modeling via Continuous Denoising

Flow-based Language Models (FLMs) replace factorized ancestral sampling with sample-level continuous transport via flow matching, and can be distilled into a flow map language model that generates in as few as one step, matching 8-step discrete diffusion quality with an ~8.3× speedup.

Language models based on discrete diffusion have shown promise for parallel generation, but they suffer from factorization error that causes sharp quality degradation in the few-step regime. To overcome this, Flow-based Language Models (FLMs) move from factorized ancestral sampling to sample-level continuous transport via flow matching. FLMs achieve strong performance through principled design choices such as a decoding-error-based time reparameterization. To enable few-step generation, the paper introduces the two-time denoiser, a novel reparameterization of the flow map that provably lies on the probability simplex, allowing the authors to distill the FLM into a flow map language model (FMLM) via cross-entropy. FMLM transports noise to data in as few as one step, outperforming recent few-step discrete diffusion models and matching their 8-step quality at one step with an approximately 8.3× speedup.
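To make "sample-level continuous transport" concrete, here is a generic flow-matching sampler, not the paper's FLM, two-time denoiser, or time reparameterization. It assumes a linear path x_t = (1 - t)·x0 + t·x1 from Gaussian noise x0 to one-hot token vectors x1, and a denoiser that predicts x1 as a point on the simplex; the velocity is then recovered as (x̂1 - x_t)/(1 - t) and integrated with Euler steps. The `denoiser` callable is a hypothetical stand-in for a trained network.

```python
import numpy as np

def euler_flow_sample(denoiser, x0, num_steps):
    """Integrate dx/dt = v(x_t, t) from t=0 (noise) to t=1 (data).

    Under the assumed linear path x_t = (1 - t) * x0 + t * x1, the velocity is
    v = (x1_hat - x_t) / (1 - t), where x1_hat is the denoiser's estimate of
    the clean one-hot data (a point on the probability simplex per position).
    """
    x = x0.copy()
    ts = np.linspace(0.0, 1.0, num_steps + 1)
    for t, t_next in zip(ts[:-1], ts[1:]):
        x1_hat = denoiser(x, t)        # shape (L, V), rows sum to 1
        v = (x1_hat - x) / (1.0 - t)   # t < 1 at every evaluation point
        x = x + (t_next - t) * v
    return x

# Usage with a toy "perfect" denoiser for a single-sequence data distribution.
vocab, target_ids = 5, [1, 4, 2]
target = np.eye(vocab)[target_ids]
noise = np.random.default_rng(0).standard_normal((len(target_ids), vocab))
sample = euler_flow_sample(lambda x, t: target, noise, num_steps=1)
tokens = sample.argmax(axis=-1)  # decode each position by argmax
```

With a perfect denoiser, even one Euler step lands exactly on the data. With a real denoiser, a single step returns only the per-position *average* prediction, which is why few-step quality hinges on learning a better map (the role the talk assigns to the distilled flow map).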

S13 | The Diffusion Duality, Chapter II: Ψ-Samplers and Efficient Curriculum

Justin Deschenaux presents a family of Predictor-Corrector samplers for discrete diffusion models that generalize prior approaches to arbitrary noise processes and, unlike conventional methods, continue to improve as the number of sampling steps increases.

In today's session, Justin Deschenaux presents The Diffusion Duality, Chapter II: Ψ-Samplers and Efficient Curriculum. This work introduces a family of Predictor-Corrector (PC) samplers for discrete diffusion models. The method generalizes prior approaches to arbitrary noise processes and, when combined with uniform-state diffusion, overcomes the limitations of ancestral samplers. Unlike conventional methods, these samplers continue to improve as the number of sampling steps increases. The framework is evaluated on language and image modeling tasks, achieving lower perplexity on OpenWebText and improved FID/IS scores on CIFAR-10. Additionally, the work introduces a memory-efficient training curriculum that reduces training time and memory usage while maintaining strong performance.

Featured Videos


How did diffusion LLMs get so fast?

Techniques for accelerating diffusion LLMs, from self-distillation and curriculum learning to KV caching and block diffusion

This video discusses techniques for making diffusion LLMs faster, including self-distillation through time, curriculum learning, confidence scores for unmasking, guided diffusion (FlashDLM), approximate KV caching (dLLM-Cache, dKV-Cache), and block diffusion.
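As a rough illustration of using confidence scores for unmasking, the sketch below implements one greedy decoding step: among the still-masked positions, it commits the `k` tokens the model is most confident about and leaves the rest masked for later steps. This is a generic heuristic under stated assumptions (a hypothetical `MASK_ID`, and a `probs` array of shape `(L, V)` from some model), not the exact procedure of any method named in the video.

```python
import numpy as np

MASK_ID = 0  # hypothetical mask-token id

def confidence_unmask_step(xt, probs, k):
    """Unmask the k masked positions with the highest max-probability.

    xt:    (L,) current token ids, MASK_ID where still masked.
    probs: (L, V) model predictive distribution for each position.
    """
    xt = xt.copy()
    masked_idx = np.flatnonzero(xt == MASK_ID)
    if masked_idx.size == 0:
        return xt
    p = probs[masked_idx].copy()
    p[:, MASK_ID] = 0.0                  # never commit the mask token itself
    pred = p.argmax(axis=-1)             # most likely token per masked position
    conf = p.max(axis=-1)                # its probability, used as confidence
    k = min(k, masked_idx.size)
    top = np.argsort(-conf, kind="stable")[:k]
    xt[masked_idx[top]] = pred[top]
    return xt
```

Calling this repeatedly, with fresh `probs` from the model after each step, decodes a sequence in roughly `L / k` forward passes instead of `L`; larger `k` trades quality for speed.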

But How Do Diffusion Language Models Actually Work?

Jia-Bin Huang explores several ideas for applying diffusion models to language modeling

Most large language models (LLMs) today are autoregressive, i.e., they predict text in left-to-right order. Diffusion models, by contrast, offer iterative refinement, flexible control, and faster sampling. In this video, we explore several ideas for applying diffusion models to language modeling.

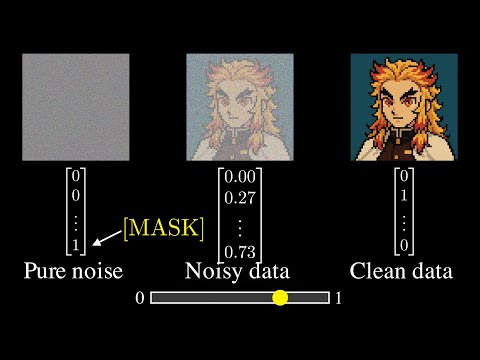

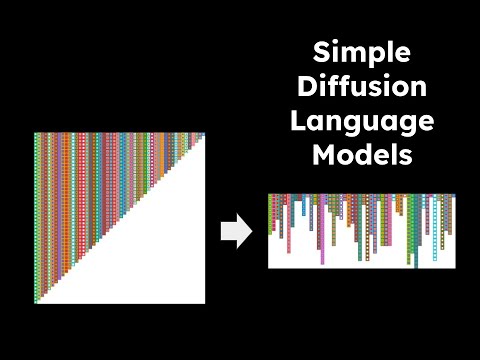

Simple Diffusion Language Models

Quick introduction to Masked Diffusion Language Models (MDLM) by Alexander Rush

About the Reading Group

Diffusion LLMs are emerging as faster, more controllable alternatives to traditional autoregressive LLMs and are rapidly gaining adoption. This reading group builds a community for exchanging and debating emerging ideas in this space. While our primary focus is discrete diffusion models for language, we also welcome work on other modalities and applications, such as molecular design, drug discovery, and beyond.

Meet the Organizers

Subham Sahoo

Holds a Ph.D. from Cornell Tech, where he specialized in diffusion language models. He has made foundational contributions to the field, with his work deployed at scale by Google, NVIDIA, and ByteDance across language generation and drug discovery.

Justin Deschenaux

PhD student in Machine Learning at EPFL, advised by Prof. Caglar Gulcehre. Previously interned at Apple MLR. His research interests include diffusion language models, fast generative models, and generalization.